Recommendations for visual predictive checks in Bayesian workflow

Online preprint | Code | Preprint (arXiv)

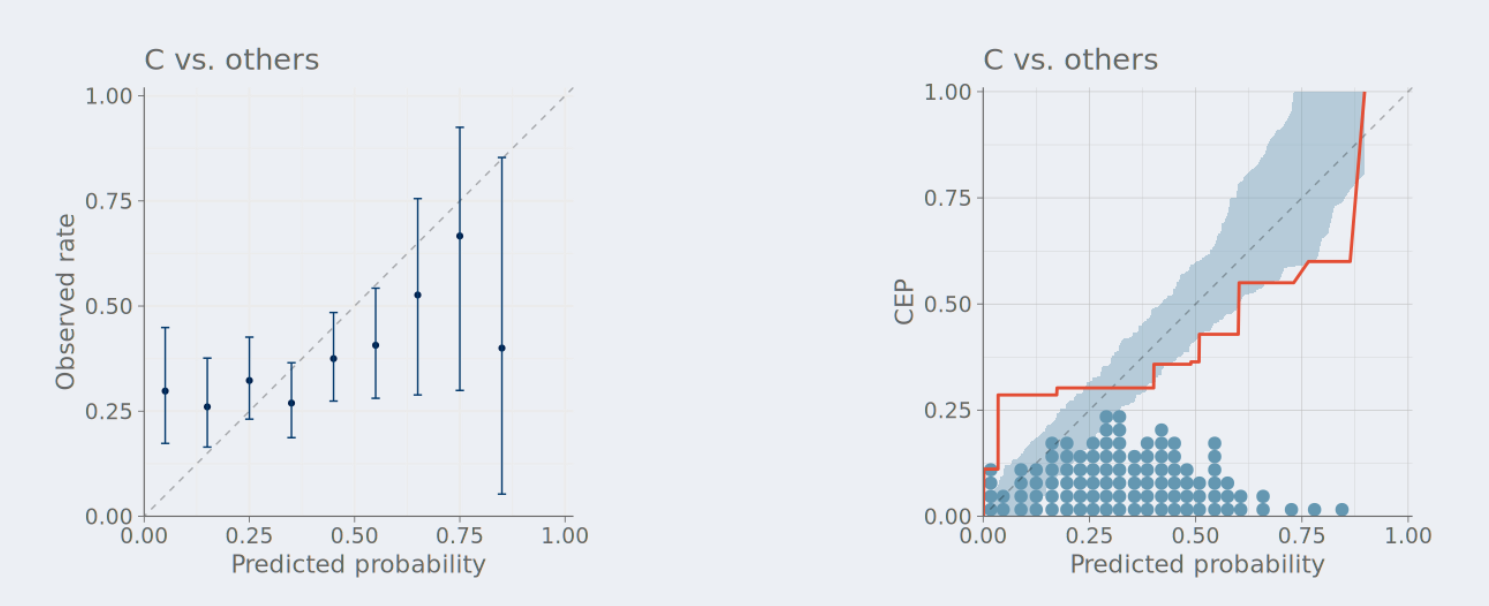

A key step in the Bayesian workflow for model building is the graphical assessment of model predictions. The goal of these assessments is to identify whether the model is a reasonable representation of the domain knowledge and observed data. Many commonly used visual predictive checks can be misleading if their implicit assumptions are not met, and more guidance is needed on selecting, interpreting, and diagnosing appropriate visualizations.

We offer recommendations and diagnostic tools to mitigate ad hoc decision-making in visual predictive checks. These contributions aim to improve the robustness and interpretability of Bayesian model criticism practices.
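A graphical posterior predictive check has two basic ingredients. First, draw replicated datasets from the posterior predictive distribution. Second, evaluate their empirical CDFs alongside the ECDF of the observed data. The following is a minimal sketch under assumed choices and is not the paper's recommended procedure. The conjugate normal model with known σ, the function names, and the ECDF overlay as the display are all hypothetical, and plotting is omitted. ECDFs are used here because they need no bandwidth or binning choice, unlike kernel density or histogram overlays.

```python
import numpy as np

def ecdf_on_grid(samples, grid):
    # Fraction of samples <= each grid point (empirical CDF evaluated on a grid)
    samples = np.sort(np.asarray(samples, dtype=float))
    return np.searchsorted(samples, grid, side="right") / samples.size

def ppc_ecdf_overlay(y, sigma=1.0, prior_sd=10.0, n_rep=100, n_grid=50, seed=0):
    """Observed-data ECDF and ECDFs of replicated datasets on a shared grid.

    Hypothetical toy model (assumption, not from the source):
    y_i ~ Normal(mu, sigma) with sigma known, and mu ~ Normal(0, prior_sd).
    """
    rng = np.random.default_rng(seed)
    y = np.asarray(y, dtype=float)
    n = y.size
    # Conjugate normal posterior for mu
    post_prec = 1.0 / prior_sd**2 + n / sigma**2
    post_mean = (y.sum() / sigma**2) / post_prec
    post_sd = 1.0 / np.sqrt(post_prec)
    # One posterior draw of mu per replicated dataset, each replicate the same size as y
    mu_draws = rng.normal(post_mean, post_sd, size=n_rep)
    y_rep = rng.normal(mu_draws[:, None], sigma, size=(n_rep, n))
    grid = np.linspace(min(y.min(), y_rep.min()), max(y.max(), y_rep.max()), n_grid)
    obs = ecdf_on_grid(y, grid)
    rep = np.stack([ecdf_on_grid(row, grid) for row in y_rep])
    return grid, obs, rep

# Usage with hypothetical data
y = np.random.default_rng(1).normal(2.0, 1.0, size=40)
grid, obs, rep = ppc_ecdf_overlay(y)
```

To draw the familiar overlay, plot each row of `rep` as a thin line and plot `obs` on top. If `obs` departs systematically from the cloud of replicated ECDFs, that points to a mismatch between model and data.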

Posterior SBC: simulation-based calibration checking conditional on data

Simulation-based calibration checking (SBC) is the process of testing whether an inference algorithm and model implementation work for data generated with parameter values plausible under the chosen priors. This makes SBC a natural and desirable way to test whether the inference works across a wide range of plausible datasets.
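The SBC loop described above can be sketched as follows. The sketch assumes a hypothetical conjugate normal model in which exact posterior sampling stands in for the inference algorithm under test. Each iteration does four things:

1. Draw a parameter from the prior.
2. Simulate data given that parameter.
3. Run inference on the simulated data.
4. Record the rank of the true parameter among the posterior draws.

If the inference is correct, the ranks are uniformly distributed.

```python
import numpy as np

def sbc_ranks(n_sims=500, n_obs=10, n_draws=99, sigma=1.0, prior_sd=2.0, seed=0):
    """SBC rank statistics for a hypothetical conjugate normal model.

    Model (assumption): mu ~ Normal(0, prior_sd), y_i ~ Normal(mu, sigma), sigma known.
    Exact posterior sampling stands in for the inference algorithm under test,
    so the ranks should be uniform on 0..n_draws.
    """
    rng = np.random.default_rng(seed)
    ranks = np.empty(n_sims, dtype=int)
    for s in range(n_sims):
        mu_true = rng.normal(0.0, prior_sd)                # 1. draw a parameter from the prior
        y = rng.normal(mu_true, sigma, size=n_obs)         # 2. simulate data given that parameter
        prec = 1.0 / prior_sd**2 + n_obs / sigma**2        # 3. "inference": exact posterior
        post_mean = (y.sum() / sigma**2) / prec
        draws = rng.normal(post_mean, 1.0 / np.sqrt(prec), size=n_draws)
        ranks[s] = np.sum(draws < mu_true)                 # 4. rank of the true value
    return ranks

ranks = sbc_ranks()
```

To check a real implementation, replace the exact draws with the output of the algorithm under test. Then inspect the rank histogram or ECDF for departures from uniformity.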

However, after observing data, we want to know whether the inference works for that particular dataset. In this paper, we propose posterior SBC, a version of SBC that can be used to validate the inference conditionally on the observed data.
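This sketch assumes that posterior SBC follows the usual SBC recipe, with the posterior p(θ | y) taking the role of the prior. Under that assumption, one iteration:

1. Draws θ̃ from p(θ | y).
2. Simulates new data ỹ.
3. Refits the model to the observed data together with ỹ.
4. Ranks θ̃ among the resulting posterior draws.

Because p(θ | y) p(ỹ | θ) is a valid joint distribution, exact inference again yields uniform ranks. The conjugate normal model, the number of new observations `n_new`, and all function names are hypothetical choices, not taken from the paper.

```python
import numpy as np

def normal_posterior(y, sigma, prior_mean, prior_sd):
    # Conjugate update for mu with y_i ~ Normal(mu, sigma), sigma known
    prec = 1.0 / prior_sd**2 + y.size / sigma**2
    mean = (prior_mean / prior_sd**2 + y.sum() / sigma**2) / prec
    return mean, 1.0 / np.sqrt(prec)

def posterior_sbc_ranks(y_obs, n_sims=500, n_new=5, n_draws=99, sigma=1.0,
                        prior_sd=2.0, seed=0):
    """Posterior-SBC-style ranks conditional on observed data y_obs (sketch).

    Assumption: p(mu | y_obs) plays the role of the SBC prior, and each refit
    conditions on y_obs plus the newly simulated data.
    """
    rng = np.random.default_rng(seed)
    post_mean, post_sd = normal_posterior(y_obs, sigma, 0.0, prior_sd)
    ranks = np.empty(n_sims, dtype=int)
    for s in range(n_sims):
        mu_tilde = rng.normal(post_mean, post_sd)          # draw from p(mu | y_obs)
        y_new = rng.normal(mu_tilde, sigma, size=n_new)    # simulate new data
        y_all = np.concatenate([y_obs, y_new])             # refit on observed + new data
        m, sd = normal_posterior(y_all, sigma, 0.0, prior_sd)
        draws = rng.normal(m, sd, size=n_draws)
        ranks[s] = np.sum(draws < mu_tilde)                # rank of mu_tilde among draws
    return ranks

# Usage with hypothetical observed data
y_obs = np.random.default_rng(42).normal(1.5, 1.0, size=20)
ranks = posterior_sbc_ranks(y_obs)
```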

We illustrate the utility of posterior SBC in three case studies: (1) a simple multilevel model; (2) a model governed by differential equations; and (3) a joint integrative neuroscience model approximated via amortized Bayesian inference with neural networks.

Graphical test for discrete uniformity and its applications in goodness-of-fit evaluation and multiple sample comparison

Paper (Statistics and Computing) | Code

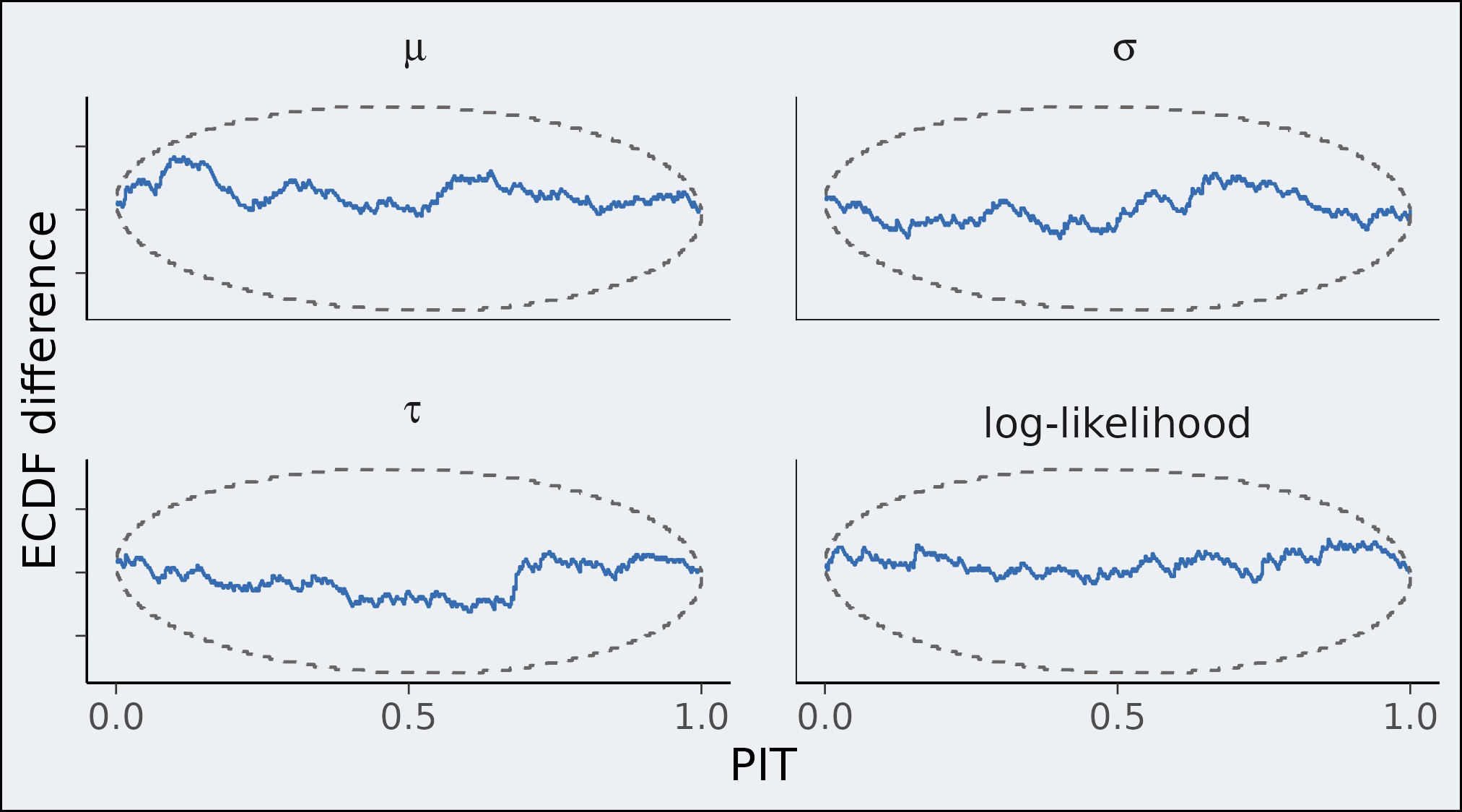

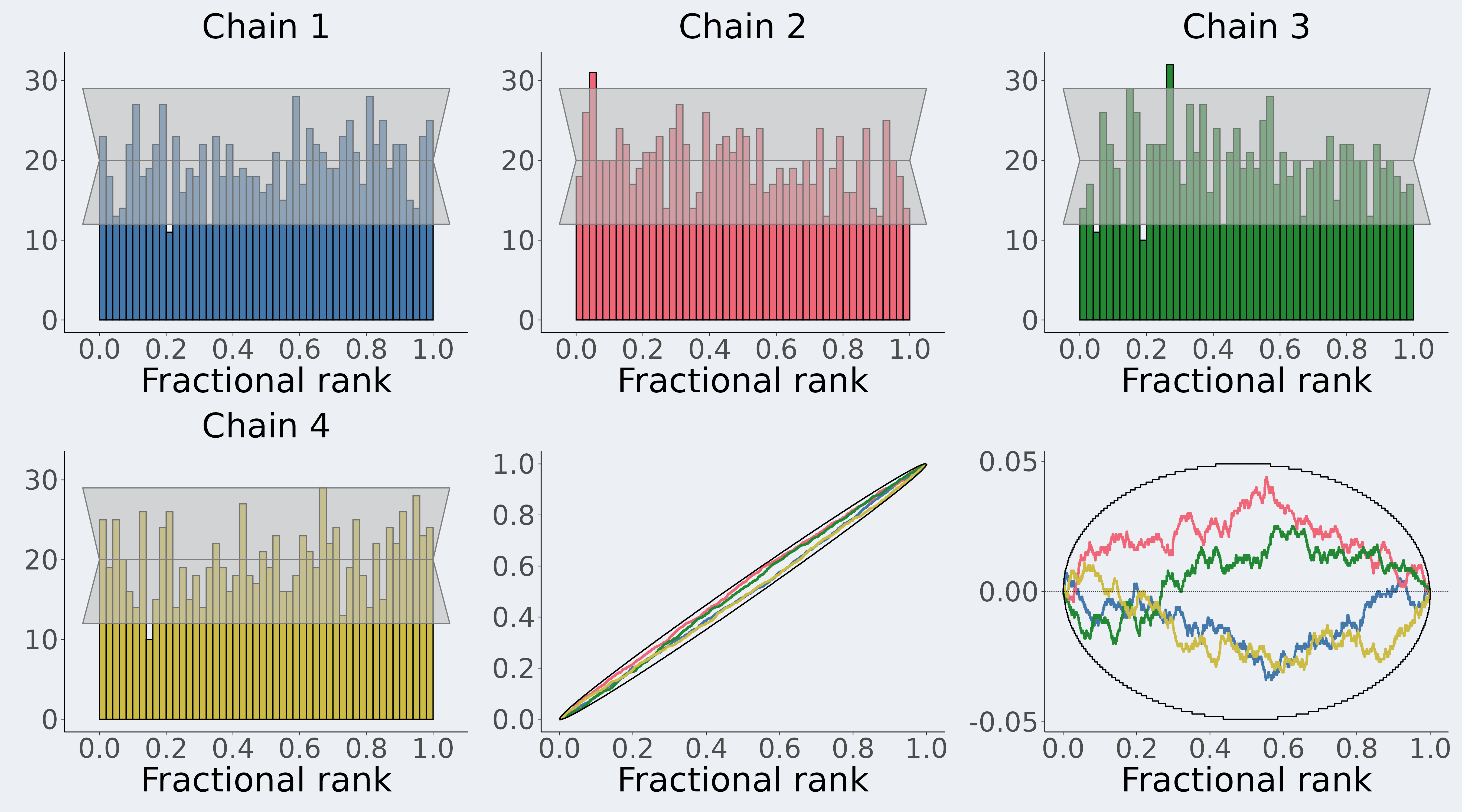

Assessing goodness of fit to a given distribution is important in computational statistics. Using the probability integral transformation (PIT), we turn this assessment into a test of uniformity.
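The PIT rests on a standard fact: if y has a continuous CDF F, then F(y) is uniformly distributed on (0, 1). The sketch below uses only the Python standard library. It computes PIT values for hypothetical standard normal data twice: once under the correct reference distribution and once under a too-wide one.

```python
import random
from statistics import NormalDist

def pit_values(data, cdf):
    # Probability integral transform: u_i = F(y_i) for a hypothesized continuous CDF F
    return [cdf(y) for y in data]

random.seed(0)
data = [random.gauss(0.0, 1.0) for _ in range(1000)]  # hypothetical data, N(0, 1)

pit_correct = pit_values(data, NormalDist(0.0, 1.0).cdf)  # matches the generating distribution
pit_wrong = pit_values(data, NormalDist(0.0, 2.0).cdf)    # too wide: values pile up near 0.5
```

Under the too-wide reference distribution, the PIT values cluster around 0.5, so their ECDF rises too steeply in the middle. A uniformity test is meant to detect exactly this kind of deviation.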

- We present new simulation- and optimization-based methods for obtaining simultaneous confidence bands for the whole empirical cumulative distribution function (ECDF) of the PIT values under the assumption of uniformity.

- We further extend the methods to determine simultaneous confidence bands for testing whether multiple samples come from the same underlying distribution.
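Under uniformity, the ECDF count N·F̂(z) at any point z ∈ (0, 1) follows a Binomial(N, z) distribution. This suggests a simulation-based construction of simultaneous bands:

1. Pick a grid of evaluation points.
2. Form pointwise binomial intervals at some level γ.
3. Tune γ by simulation so that about 1 − α of uniform samples stay inside at every point at once.

The following is a minimal sketch of that idea, not the paper's exact algorithm. The evaluation grid, the number of simulations, and all names are assumptions, and the optimization-based variant is not shown.

```python
import math
import numpy as np

def binom_cdf_table(n, z):
    # cdf[i, k] = P(X <= k) for X ~ Binomial(n, z[i]), k = 0..n (fine for moderate n)
    k = np.arange(n + 1)
    coef = np.array([math.comb(n, j) for j in range(n + 1)], dtype=float)
    pmf = coef * z[:, None] ** k * (1.0 - z[:, None]) ** (n - k)
    return np.cumsum(pmf, axis=1)

def tail_prob(counts, cdf):
    # Two-sided pointwise tail probability of ECDF counts; last axis indexes the z points
    idx = np.arange(cdf.shape[0])
    below = cdf[idx, counts]                                                      # P(X <= k)
    above = 1.0 - np.where(counts > 0, cdf[idx, np.maximum(counts - 1, 0)], 0.0)  # P(X >= k)
    return np.minimum(1.0, 2.0 * np.minimum(below, above))

def ecdf_counts(sample, z):
    # Number of values <= each evaluation point
    return np.searchsorted(np.sort(sample), z, side="right")

def simultaneous_band(n, alpha=0.05, n_eval=20, n_sims=2000, seed=0):
    """Simulation-adjusted simultaneous band for the ECDF of n uniform PIT values."""
    rng = np.random.default_rng(seed)
    z = np.arange(1, n_eval) / n_eval                     # hypothetical evaluation grid
    cdf = binom_cdf_table(n, z)
    sims = np.stack([ecdf_counts(rng.uniform(size=n), z) for _ in range(n_sims)])
    # gamma: pointwise level at which ~(1 - alpha) of uniform samples stay inside everywhere
    gamma = np.quantile(tail_prob(sims, cdf).min(axis=1), alpha)
    # Band at z[i]: all counts k whose tail probability is >= gamma (a contiguous range)
    grid = np.repeat(np.arange(n + 1)[:, None], z.size, axis=1)
    ok = tail_prob(grid, cdf) >= gamma
    lo = np.argmax(ok, axis=0)                            # first admissible count
    hi = n - np.argmax(ok[::-1], axis=0)                  # last admissible count
    return z, lo, hi, gamma

def inside_band(sample, z, lo, hi):
    # True if the sample's ECDF counts stay within the band at every evaluation point
    counts = ecdf_counts(sample, z)
    return bool(np.all((counts >= lo) & (counts <= hi)))

z, lo, hi, gamma = simultaneous_band(100)
```

A PIT sample whose ECDF counts leave the band at any evaluation point is flagged as inconsistent with uniformity at roughly level α. Because γ is much smaller than α, the band is wider at each point than a pointwise 1 − α interval would be.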

These methods can also be applied when the reference distribution is represented by a finite sample, which is useful, for example, in simulation-based calibration (SBC). The multiple-sample comparison test is useful, for example, as a complementary check in multi-chain Markov chain Monte Carlo (MCMC) convergence diagnostics.
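The multiple-sample comparison can be sketched by assuming it is based on ranks within the pooled sample. Rank all values across the samples, then scale each sample's ranks to (0, 1]. If all samples come from the same distribution, each sample's scaled ranks are approximately uniform. The sketch below computes these ranks for hypothetical MCMC chains, one of which is deliberately shifted. It does not construct the simultaneous bands, which must account for the dependence between samples that share the same pool.

```python
import numpy as np

def pooled_fractional_ranks(samples):
    """Scaled ranks of each sample's values within the pooled sample.

    samples: 2-D array, one row per sample (e.g. one MCMC chain per row).
    Ties are broken by order of appearance (assumption: continuous values).
    """
    samples = np.asarray(samples, dtype=float)
    n_total = samples.size
    order = np.argsort(samples, axis=None, kind="stable")  # flatten, then sort
    ranks = np.empty(n_total, dtype=float)
    ranks[order] = np.arange(1, n_total + 1)
    # Scale ranks to (0, 1]; each row then behaves roughly like uniform PIT values
    return (ranks / n_total).reshape(samples.shape)

# Hypothetical chains: four well-mixed chains, and a copy with one shifted chain
rng = np.random.default_rng(0)
chains = rng.normal(size=(4, 250))
chains_shifted = chains.copy()
chains_shifted[3] += 1.0  # hypothetical chain stuck in a different region
r_ok = pooled_fractional_ranks(chains)
r_bad = pooled_fractional_ranks(chains_shifted)
```

In `r_bad`, the scaled ranks of the shifted chain concentrate near 1, so its ECDF falls below the diagonal. A simultaneous band for multiple samples is designed to flag this kind of departure.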